Lesson 4 - Optimal Transport and Schrödinger Bridges

What Schroedinger has to do with moving dirt

Optional reading for this lesson

Slides

Video (soon)

In this lecture, we explore the deep connections between optimal transport theory, Schrödinger bridges, and stochastic differential equations. We start with the Schrödinger bridge problem—a question about the most likely path of a diffusion process—and discover how it naturally connects to optimal transport through entropic regularization. This unified framework provides powerful tools for generative modeling, sampling, and domain adaptation in modern machine learning.

- Optional reading for this lesson

- Slides

- 1. The Schrödinger Bridge Problem

- 2. From Schrödinger Bridges to Optimal Transport

- 3. Solving the Schrödinger Bridge: Iterative Proportional Fitting

- 4. Generative Modeling via Schrödinger Bridges

- 5. Sampling and Bayesian Inference

- 6. Domain Adaptation and Transport

- 7. Future Directions and Open Questions

- Credits

1. The Schrödinger Bridge Problem

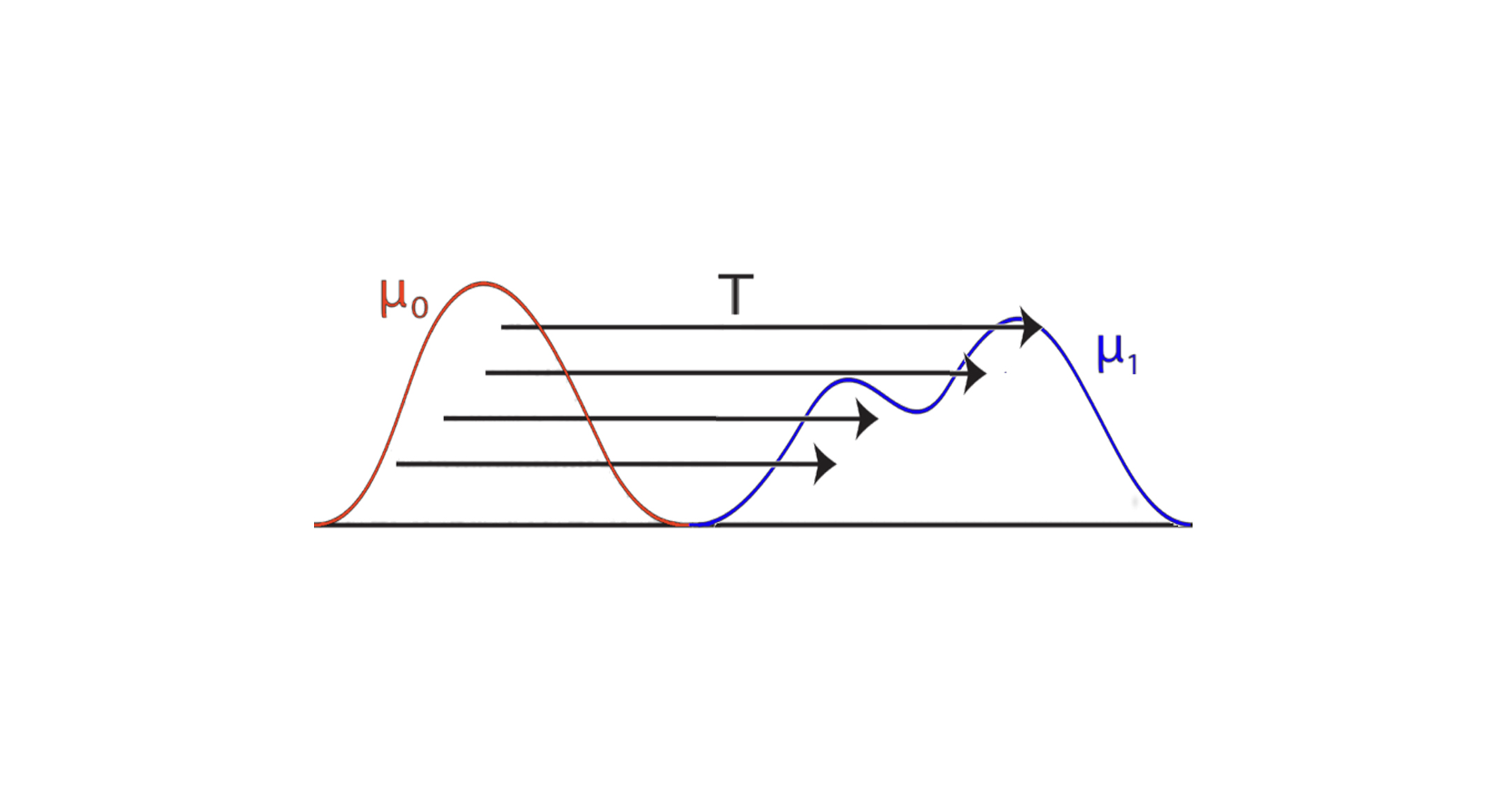

We begin with a problem introduced by Erwin Schrödinger in 1931: given the initial and final marginals and of a diffusion process, what is the most likely path that the process could have taken? This seemingly abstract question turns out to have profound implications for modern machine learning.

1.1 Problem Formulation

Consider a reference diffusion process (e.g., Brownian motion) on the time interval :

with initial distribution . The Schrödinger bridge problem seeks a new process:

with the same diffusion coefficient but a modified drift , such that:

- (initial constraint)

- (final constraint)

- The process minimizes the relative entropy (KL divergence) with respect to the reference process

The relative entropy between two path measures and is defined as:

if ( is absolutely continuous with respect to ), and otherwise.

1.2 Solution via h-Transform

The solution to the Schrödinger bridge problem can be expressed using the h-transform we saw in the previous lecture. Specifically, the optimal drift is:

where is a solution to the Schrödinger system of PDEs:

with boundary conditions:

- for all (terminal condition for backward equation)

- for all (initial condition for forward equation)

- The product gives the marginal density at time

Derivation via Variational Calculus: The optimal drift can be derived by considering the first-order optimality conditions for the constrained optimization problem. Using Lagrange multipliers and for the marginal constraints, the Euler-Lagrange equations yield the Schrödinger system.

Connection to h-Transform: The connection becomes clear when we write the transition density of the bridge process:

where is the transition density of the reference process. This is exactly the h-transform formula, where serves as the harmonic function that modifies the reference process to satisfy the boundary conditions.

Uniqueness: Under mild regularity conditions (e.g., the reference process has positive transition densities), the Schrödinger system has a unique solution, ensuring the uniqueness of the bridge process.

1.3 Why This Matters for Machine Learning

The Schrödinger bridge problem provides a principled framework for several key ML tasks:

- Generative Modeling: Bridge from a simple noise distribution to complex data distributions

- Sampling: Transport samples from a tractable prior to an intractable posterior

- Domain Adaptation: Smoothly transport samples between different domains

- Interpolation: Create smooth paths between distributions

By constraining both endpoints, we obtain a unique, optimal transport path that minimizes the “effort” (measured by KL divergence) required to transform one distribution into another. This variational characterization ensures that the bridge process is the most likely path connecting the two distributions under the reference measure.

2. From Schrödinger Bridges to Optimal Transport

The connection between Schrödinger bridges and optimal transport is profound. As the noise level , the Schrödinger bridge converges to the optimal transport solution. Understanding this connection reveals why entropic regularization is so powerful.

2.1 The Monge Problem (1781)

The original optimal transport problem, formulated by Gaspard Monge, asks: given two probability measures and on , find a transport map that pushes forward to (i.e., ) while minimizing the transport cost:

where is a cost function. Common choices are:

- Quadratic cost: (leads to 2-Wasserstein distance)

- Linear cost: (leads to 1-Wasserstein distance)

The pushforward notation means that for any measurable set , we have , or equivalently, for any test function :

2.2 The Kantorovich Relaxation (1940s)

The Monge problem may not have a solution (the infimum might not be attained, or the constraint may be too restrictive). Léonard Kantorovich relaxed this by considering transport plans (couplings) instead of transport maps.

A coupling is a joint probability measure on with marginals and , meaning:

- (first marginal)

- (second marginal)

The Kantorovich problem is:

where is the set of all couplings with marginals and . The value is called the -Wasserstein distance.

For , we get the 2-Wasserstein distance , which has particularly nice properties:

- It metrizes weak convergence of probability measures

- It has a dynamic formulation (Benamou-Brenier)

- It connects naturally to SDEs and diffusion processes

2.3 Entropic Regularization: The Bridge Between Two Worlds

The connection between Schrödinger bridges and optimal transport emerges through entropic regularization. Instead of solving the exact Kantorovich problem, we add an entropy penalty:

where is the relative entropy with respect to the product measure , and is a regularization parameter.

As , the entropically regularized problem converges to the original optimal transport problem:

2.4 The Unification: Schrödinger Bridge as Entropic OT

The Schrödinger bridge problem is exactly the entropically regularized optimal transport problem! This is a profound connection that unifies two seemingly different problems.

Theorem (Léonard, Mikami): The Schrödinger bridge problem with reference process (Brownian motion) and regularization parameter is equivalent to the entropically regularized optimal transport problem with quadratic cost.

Unified Formulation: Let us see how these two threads merge. Consider:

Optimal Transport (Kantorovich): Minimize transport cost over couplings:

Entropic Regularization: Add entropy penalty for computational tractability:

Schrödinger Bridge: Minimize path-space KL divergence:

where is the set of path measures with marginals and , and is the reference Brownian motion measure.

The Equivalence: The Schrödinger bridge problem can be reformulated as:

where the regularization parameter is the variance of the Brownian motion.

Mathematical Derivation: To establish this equivalence, we need to show how the path-space KL divergence relates to the coupling-space formulation. The connection comes from several mathematical facts:

Path-to-Coupling Mapping: The path measure induces a coupling via its time-0 and time-1 marginals:

KL Divergence Decomposition: For a Brownian motion reference with , the KL divergence decomposes as: \begin{align} \mathcal{H}(\mathbb{Q} \| \mathbb{P}^R) &= \mathbb{E}_{\mathbb{Q}}\left[\log \frac{d\mathbb{Q}}{d\mathbb{P}^R}\right] \\ &= \int_0^1 \mathbb{E}_{\mathbb{Q}}\left[\frac{1}{2\sigma^2}\|\tilde{b}_t\|^2\right] dt + \text{(boundary terms)} \end{align} where is the drift of the bridge process. Using Girsanov’s theorem and the fact that the bridge minimizes KL divergence, this can be shown to equal: where depends only on the fixed marginals and .

Optimality Conditions: The minimizer of the path-space problem satisfies the Schrödinger system, which in turn implies that the induced coupling minimizes the entropically regularized transport cost.

The detailed proof involves stochastic calculus, Girsanov’s theorem, and the theory of large deviations. The essential observation is that:

- The path measure induces a coupling via its time-0 and time-1 marginals

- The KL divergence decomposes into:

- A transport cost term:

- An entropy term:

- Terms that depend only on the marginals (which are fixed by the constraints)

Limit Behavior: In the limit , the entropy penalty vanishes, and we recover the original optimal transport problem:

This shows that Schrödinger bridges provide a stochastic regularization of optimal transport, where the noise level controls the trade-off between:

- Exactness: Smaller gives solutions closer to optimal transport

- Smoothness: Larger gives smoother, more regularized solutions

- Computational tractability: Larger makes the problem easier to solve

This connection was formalized by Mikami, Léonard, and others (see Léonard 2014), establishing the deep mathematical relationship between these two problems.

2.5 Dynamic Formulation: Benamou-Brenier

The static optimal transport problem can be reformulated dynamically using fluid dynamics. The Benamou-Brenier formulation expresses the 2-Wasserstein distance as:

subject to:

- Continuity equation:

- Boundary conditions: ,

where is the density at time and is the velocity field.

Mathematical Interpretation: This formulation can be understood through the lens of optimal control theory. The velocity field acts as a control that transports mass from to , and we seek the control that minimizes the kinetic energy subject to the constraint that mass is conserved (continuity equation).

Connection to Fokker-Planck: This formulation connects optimal transport to the continuity equation, which we’ve seen before in the context of the Fokker-Planck equation. The continuity equation ensures that mass is conserved as it flows from to . In fact, the Fokker-Planck equation can be written as:

where is the drift velocity and the diffusion term represents the stochastic component.

Schrödinger Bridge Dynamic Formulation: For the Schrödinger bridge, we have a similar dynamic formulation but with an additional diffusion term:

The diffusion term represents the stochastic component of the process. In the limit , this reduces to the deterministic continuity equation, recovering the Benamou-Brenier formulation.

Optimal Velocity Field: The optimal velocity field for the Schrödinger bridge is given by:

where solves the Schrödinger system. This can be derived from the first-order optimality conditions of the dynamic formulation.

3. Solving the Schrödinger Bridge: Iterative Proportional Fitting

The Schrödinger bridge can be computed using an iterative algorithm called Iterative Proportional Fitting (IPF), also known as the Sinkhorn algorithm in discrete settings. This algorithm is fundamental to both optimal transport and Schrödinger bridges, and understanding it is crucial for practical applications.

3.1 The IPF Algorithm: Detailed Derivation

IPF alternates between updating the forward and backward transitions to match the boundary marginals. The algorithm is derived from the fact that the Schrödinger bridge solution must satisfy both marginal constraints simultaneously.

Mathematical Foundation: The Schrödinger bridge solution is characterized by three properties:

- Forward constraint: The time-1 marginal is , i.e.,

- Backward constraint: The time-0 marginal is , i.e.,

- Optimality: It minimizes among all measures satisfying these constraints

Variational Characterization: The bridge can be characterized as the solution to:

where is the set of path measures with the prescribed marginals.

Dual Formulation: Using Lagrange multipliers, this constrained optimization problem has a dual formulation. The optimal measure takes the form:

where and are the solutions to the Schrödinger system. IPF can be seen as iteratively solving for these functions.

IPF Algorithm: The algorithm proceeds as follows:

Initialization: Start with the reference process transition densities and set .

Iteration :

- Forward pass (odd iterations): Update to match the final marginal :

- Compute the current time-1 marginal:

- Update the forward transition:

- This ensures the new measure satisfies

- Backward pass (even iterations): Update to match the initial marginal :

- Compute the current time-0 marginal:

- Update the backward transition:

- This ensures the new measure satisfies

Why This Works: Each iteration has the following mathematical properties:

Monotonicity: The KL divergence decreases monotonically:

This follows from the fact that each update minimizes KL divergence subject to one constraint while maintaining the other.

- Constraint Satisfaction: Each iteration maintains one constraint while updating to satisfy the other:

- Forward pass: Maintains , updates to satisfy

- Backward pass: Maintains , updates to satisfy

- Convergence: The sequence converges to the unique Schrödinger bridge solution . The convergence can be established using:

- The fact that KL divergence is lower-bounded (by 0)

- The compactness of the constraint set

- The uniqueness of the minimizer (which follows from strict convexity of KL divergence)

Rate of Convergence: The convergence rate depends on:

- The “distance” between and , measured by their Wasserstein distance

- The noise level : larger typically leads to faster convergence (the problem becomes more regularized)

- The choice of reference process: different reference processes can lead to different convergence properties

Convergence: The algorithm converges to the Schrödinger bridge solution as . The convergence rate depends on:

- The “distance” between and

- The noise level (larger typically leads to faster convergence)

- The choice of reference process

Connection to Entropic OT: In the discrete case, IPF becomes the Sinkhorn algorithm, which solves the entropically regularized optimal transport problem. This provides a computational bridge between the continuous Schrödinger bridge problem and the discrete optimal transport problem.

3.2 Discrete Case: Sinkhorn Algorithm

In the discrete case with points, the problem becomes finding a coupling matrix that minimizes:

where:

- is the cost matrix with

- and are the marginals (probability vectors)

- is the set of couplings

Optimality Conditions: The optimal coupling has the form where is the Gibbs kernel. The optimality conditions are:

which translate to:

Sinkhorn Algorithm: The algorithm solves these equations iteratively by alternating between:

- Row normalization:

- Column normalization:

In matrix form, this is:

where division is element-wise. The coupling is then given by:

Connection to IPF: This is exactly the discrete version of IPF. Each normalization step corresponds to matching one marginal constraint, and the alternating procedure converges to the optimal coupling that satisfies both constraints simultaneously.

3.3 Convergence and Properties

The IPF/Sinkhorn algorithm has several important properties:

Convergence: The algorithm converges to the unique solution of the entropically regularized problem (see Cuturi 2013).

Computational complexity: Each iteration requires operations. The number of iterations needed depends on and the desired accuracy.

Differentiability: The Sinkhorn distance is differentiable with respect to the input measures, making it suitable for gradient-based optimization in machine learning.

Stability: Entropic regularization makes the problem well-conditioned, unlike the original optimal transport problem which can be ill-posed.

The Sinkhorn algorithm has become a cornerstone of modern ML, enabling optimal transport losses in generative models, unsupervised domain adaptation, Wasserstein GANs with entropic regularization, and differentiable optimal transport layers in neural networks.

4. Generative Modeling via Schrödinger Bridges

The Schrödinger bridge framework provides a natural approach to generative modeling: we bridge from a simple noise distribution to a complex data distribution. Recent work has shown how to learn these bridges using neural networks, leading to powerful generative models that combine the benefits of diffusion models with exact boundary matching.

4.1 Learning Schrödinger Bridges with Neural Networks

The key challenge in applying Schrödinger bridges to generative modeling is that we typically don’t have access to the true data distribution—we only have samples. This motivates learning the bridge using neural networks that approximate the drift functions or score functions.

Wang et al. 2021 introduced a deep learning approach that parameterizes the drift functions using neural networks. Given a reference SDE:

with (data) and (noise), the learned Diffusion Schrödinger Bridge seeks a process:

where is parameterized by a neural network. The network is trained to minimize the path-space KL divergence:

subject to the boundary constraints, where is the path measure of the learned process and is the reference measure.

4.2 Diffusion Schrödinger Bridges: Score-Based Approach

De Bortoli et al. 2021 introduced the Diffusion Schrödinger Bridge (DSB) framework, which combines score-based diffusion models with Schrödinger bridges. This approach learns both forward and backward processes simultaneously.

Given a reference diffusion process (e.g., Ornstein-Uhlenbeck):

the DSB learns both:

- Forward process: that pushes to

- Backward process: that pushes to

The training objective combines forward and backward score matching:

where and are the marginals of the forward and backward processes, respectively.

Advantages over standard diffusion models:

- Exact boundary matching: Unlike standard diffusion models, DSB exactly matches both boundary distributions

- Bidirectional training: Training both directions can improve sample quality

- Fewer steps: Can require fewer diffusion steps than standard models

4.3 DSB Matching: Implementing IPF in Continuous Time

Shi et al. 2023 introduced DSB Matching, which improves upon DSB by using a matching-based training procedure that directly implements IPF in the continuous setting.

Instead of training forward and backward processes separately, DSB Matching alternates between:

- Training the forward process to match the backward process

- Training the backward process to match the forward process

This creates a “matching” procedure that mirrors the IPF algorithm, leading to faster convergence and better sample quality. The connection between IPF’s alternating updates and alternating training steps makes the theoretical algorithm directly implementable in practice. Specifically, each IPF iteration corresponds to a training step where one process is updated to match the other, creating a natural correspondence between the discrete IPF algorithm and continuous-time neural network training.

4.4 Practical Considerations for Generative Modeling

When applying Schrödinger bridges to generative modeling, several practical considerations arise:

Score estimation: Need accurate estimates of . This can be done using score matching networks, denoising score matching, or sliced score matching.

Discretization: The continuous-time process must be discretized. Common schemes include Euler-Maruyama, Heun’s method, and Runge-Kutta methods.

Computational cost: IPF iterations can be expensive. Recent work uses neural network approximations, unrolled optimization, and learned initialization to reduce cost.

Scalability: High-dimensional problems require dimensionality reduction, sliced optimal transport, or minibatch approximations.

5. Sampling and Bayesian Inference

The Schrödinger bridge framework provides a powerful approach to sampling from complex distributions, particularly in Bayesian inference. By bridging from a tractable prior to an intractable posterior, we can generate samples efficiently.

5.1 The Sampling Problem in Bayesian Inference

In Bayesian inference, we often need to sample from a posterior distribution:

where:

- is the prior

- is the likelihood

- is the posterior

The posterior is typically intractable, requiring approximate inference methods. Traditional approaches include MCMC, variational inference, and Langevin dynamics.

5.2 Stochastic Gradient Langevin Dynamics

Welling & Teh 2011 introduced Stochastic Gradient Langevin Dynamics (SGLD), which combines stochastic gradient descent with Langevin dynamics for Bayesian learning. This work received the ICML 2021 Test of Time Award for its lasting impact.

Algorithm: SGLD updates parameters using:

where:

- is a step size (typically decreasing)

- is Gaussian noise

- is approximated using mini-batches

Connection to Schrödinger bridges: SGLD can be seen as simulating a diffusion process that converges to the posterior distribution. The Schrödinger bridge framework provides a principled way to:

- Initialize the process from a simple prior distribution

- Transport samples to the posterior distribution

- Control the path to minimize computational cost

5.3 Maximum Likelihood Approach to Schrödinger Bridges

Vargas et al. 2021 proposed solving Schrödinger bridges using maximum likelihood estimation. Their approach expresses the bridge problem as a maximum likelihood problem over path measures.

Mathematical Formulation: Given observed paths from the bridge process, the maximum likelihood estimator maximizes:

subject to the constraints that the marginals match and . Using the factorization of the path measure:

and the fact that the bridge minimizes KL divergence, this can be reformulated as alternating between:

- Maximizing likelihood of the forward process:

- Maximizing likelihood of the backward process:

- Enforcing marginal constraints via Lagrange multipliers or penalty methods

This approach connects Schrödinger bridges to the IPF algorithm—each alternating step corresponds to an IPF iteration—and provides a practical training procedure that naturally fits into the Bayesian inference framework. The likelihood-based formulation also enables the use of standard optimization techniques and provides natural uncertainty quantification.

5.4 Practical Sampling from Schrödinger Bridges

Strømme 2023 focused on the practical aspects of sampling from learned Schrödinger bridges. The paper addresses:

- Discretization schemes: How to discretize the continuous-time bridge for numerical simulation

- Score estimation: Accurate estimation of the score functions and

- Sampling efficiency: Reducing the number of function evaluations needed

The paper provides detailed algorithms and implementation details for sampling from Schrödinger bridges, making them practical for real-world applications. This is particularly important for Bayesian inference, where we need to generate many samples efficiently.

5.5 Advantages of Schrödinger Bridges for Sampling

The Schrödinger bridge framework provides several advantages over traditional MCMC methods:

- Parallelizable: Can generate multiple independent samples in parallel

- Controlled path: The bridge provides a smooth interpolation between distributions

- Exact boundary matching: Unlike MCMC, exactly matches the target distribution at the endpoint

- Flexible initialization: Can start from any tractable distribution, not just the prior

Setup for Bayesian Sampling:

- Initial distribution: (prior)

- Final distribution: (posterior)

- Reference process: Brownian motion or Ornstein-Uhlenbeck process

The Schrödinger bridge gives us a diffusion process that:

- Starts from the prior

- Ends at the posterior

- Minimizes the “effort” (KL divergence) required

6. Domain Adaptation and Transport

Schrödinger bridges also enable domain adaptation by transporting samples from a source domain to a target domain. This application leverages the smooth interpolation properties of the bridge to preserve structure while adapting to new domains.

6.1 Domain Adaptation via Optimal Transport

Problem: Given:

- Source distribution: (e.g., synthetic data)

- Target distribution: (e.g., real data)

Find a transport map that transforms samples from to .

Solution: Use the Schrödinger bridge with:

- Reference: Brownian motion or learned reference process

The bridge provides a stochastic transport that:

- Preserves the structure of the data

- Smoothly interpolates between domains

- Can be learned from unpaired samples

6.2 Applications

Domain adaptation via Schrödinger bridges has found applications in:

- Style transfer: Transfer artistic style between images while preserving content

- Synthetic-to-real: Adapt synthetic data to match real data distributions for training

- Cross-domain generation: Generate samples in one domain conditioned on another

- Unsupervised domain adaptation: Learn transport maps without paired data

The key advantage is that the bridge provides a principled way to transport between distributions while maintaining smoothness and structure, which is crucial for preserving semantic information during domain transfer.

7. Future Directions and Open Questions

The connections between optimal transport, Schrödinger bridges, and SDEs have opened up numerous research directions:

7.1 Scalable Algorithms

Making IPF/Sinkhorn efficient for large-scale problems remains an active area of research. Recent work explores:

- Neural network approximations to avoid explicit IPF iterations

- Unrolled optimization to learn initialization strategies

- Minibatch approximations for high-dimensional problems

7.2 Neural Optimal Transport

Learning transport maps with neural networks is a growing field, combining the theoretical foundations of optimal transport with the flexibility of deep learning.

7.3 Conditional Bridges

Extending Schrödinger bridges to condition on additional information (e.g., class labels, text descriptions) enables controlled generation and more flexible applications.

7.4 Multi-Marginal Problems

Extending to more than two distributions opens up applications in multi-domain adaptation, trajectory inference, and other settings where we need to transport between multiple distributions.

7.5 Geometric Deep Learning

Optimal transport on manifolds and graphs is an emerging area that could enable applications in computational biology, network analysis, and other domains with non-Euclidean structure.

Credits

Much of the mathematical content is based on:

- Optimal Transport Notes (Cambridge) by John M. Ball

- Léonard 2014 - Survey of the Schrödinger problem

- Cuturi 2013 - Sinkhorn distances

- Peyré & Cuturi 2019 - Computational Optimal Transport

- Welling & Teh 2011 - SGLD (ICML 2021 Test of Time Award)

Recent developments in diffusion Schrödinger bridges:

- Wang et al. 2021 - Deep generative learning via SB

- Vargas et al. 2021 - Solving SB via ML

- De Bortoli et al. 2021 - Diffusion SB

- Shi et al. 2023 - DSB Matching

- Strømme 2023 - Sampling from SB

Title image from Wikipedia.